Ketrew & Biokepi

A Workflow Engine

For Big & Messy Pipelines

Computational Biology Pipelines

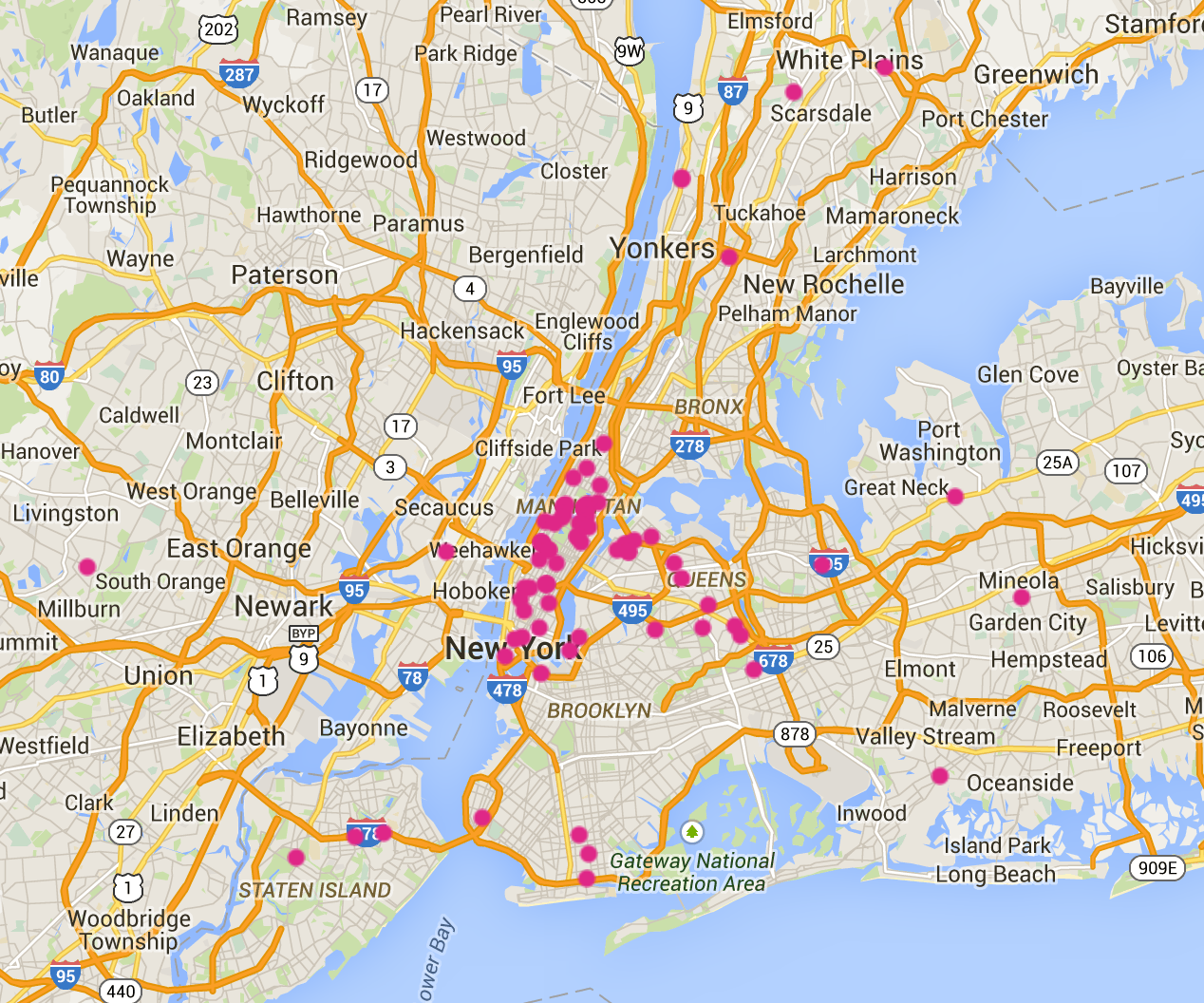

Mount Sinai

Mount Sinai

- Among

37_000employees … - We are a 15 person lab in the School of Medicine.

Hammer Lab

Within the Icahn Institute for Genomics and Multiscale Biology

Mission: Better software for biomedicine

- Infrastructure:

- Maintain a Hadoop Cluster

- Tools:

- Craft high-quality FOSS for common workloads in biomedicine

- Science:

- Use the tools – Do science

- Develop novel therapeutics or diagnostics

- Improve outcomes and reduce costs

Bioinformatics Workflows

Example: somatic variant calling

- Sequence DNA for a tumor and a normal sample

- Find differences: “variants”

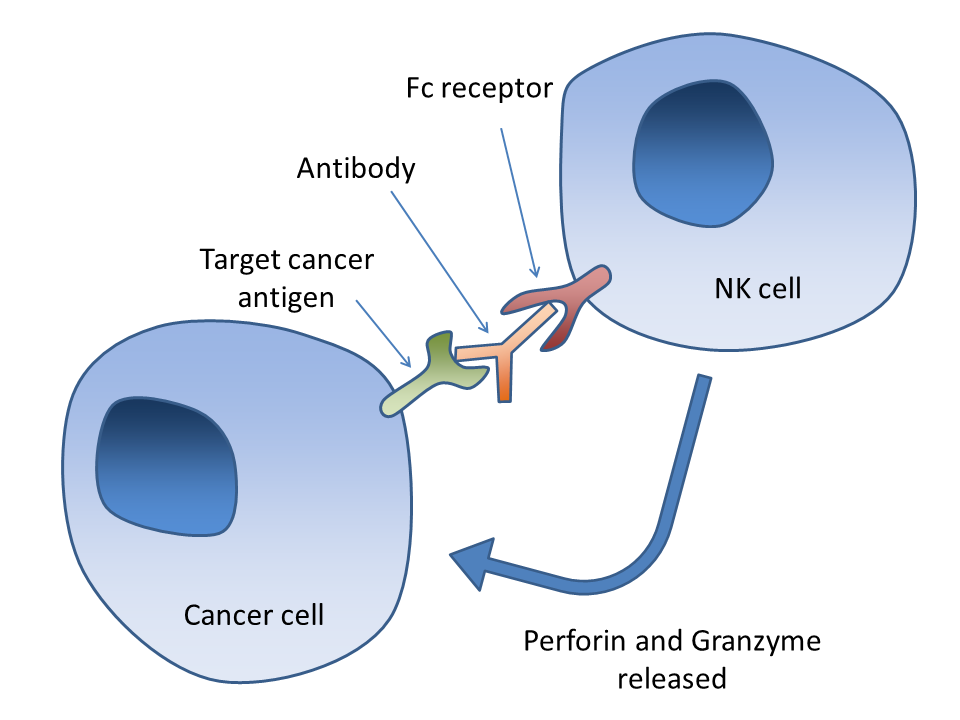

- Example application: Cancer Immunotherapy

- Somatic variants → Mutated proteins → distinctive antigens

- ⇒ Use the mutated regions of cancer proteins to make a peptide vaccine

i.e. guide the immune system in the right direction.

A Variant

Immune System

Bioinformatics Workflows

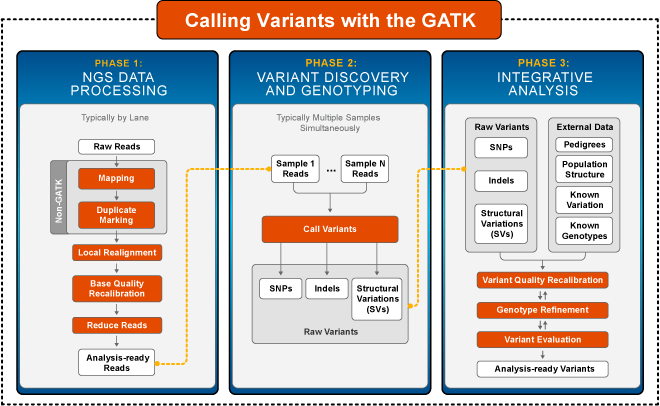

Example: Broad Institute's recommendations:

Bioinformatics Vs Software Engineering

A gentle introduction to the world of bioinfomatics software:

First encounter with the most used sequence aligner.

$ bwa -h [main] unrecognized command '-h'$ bwa --help [main] unrecognized command '--help'$ bwa -help [main] unrecognized command '-help'$ bwa help [main] unrecognized command 'help'$ bwa WTF! [main] unrecognized command 'WTF!'$ bwa Program: bwa (alignment via Burrows-Wheeler transformation) Version: 0.7.10-r789 Contact: Heng Li <lh3@sanger.ac.uk> Usage: bwa <command> [options] Command: index index sequences in the FASTA format mem BWA-MEM algorithm fastmap identify super-maximal exact matches ...

Bioinformatics Vs Software Engineering

Don't assume anything:

$ samtools index some-non-existing-file.bam [E::hts_open] fail to open file 'some-non-existing-file.bam'$ echo $? 0

Bioinformatics Vs Software Engineering

There is always more …

$ ./somaticsniper -h

./somaticsniper: invalid option -- 'h'

Unrecognizd option '-?'. $ samtools -help

...

-s FLOAT integer part sets seed of random number generator [0]; rest sets

fraction of templates to subsample [no subsampling]

...##### ERROR MESSAGE: Lexicographically sorted human genome sequence detected in reference.

##### ERROR For safety's sake the GATK requires human contigs in karyotypic order: 1, 2, ..., 10, 11, ..., 20, 21, 22, X, Y with M either leading or trailing these contigs.- and don't get me started on Casava and its HTML “report” …

- or The Broad Institute's licensing …

Workflow Engines

Need to run 100s of tools, make parameters vary, over 1000s of samples, and

- add new tools

- adapt to new versions

- install software

- assume anything can randomly fail for obscure reasons

- optimize infrastructure usage

- deal with other adverse conditions (firewalls, VPNs, etc.)

- make reproducible research easier

Workflow Engines

A lot of them.

- often specific to a platform (e.g. Hadoop)

- not flexible

- lots of assumptions (networks, file-systems, can

make, etc.) - custom DSLs (yet another half-baked Turing-complete language)

- no fault tolerance

- buggy

Ketrew

So, yes, a new one, with these goals:

- Embedded DSL → highly hackable workflows

- Multiple backends → extensible

- Correct & fault-tolerant

- Standalone or client-server → work around sys-admins

with a sane implementation language: OCaml.

Why OCaml?

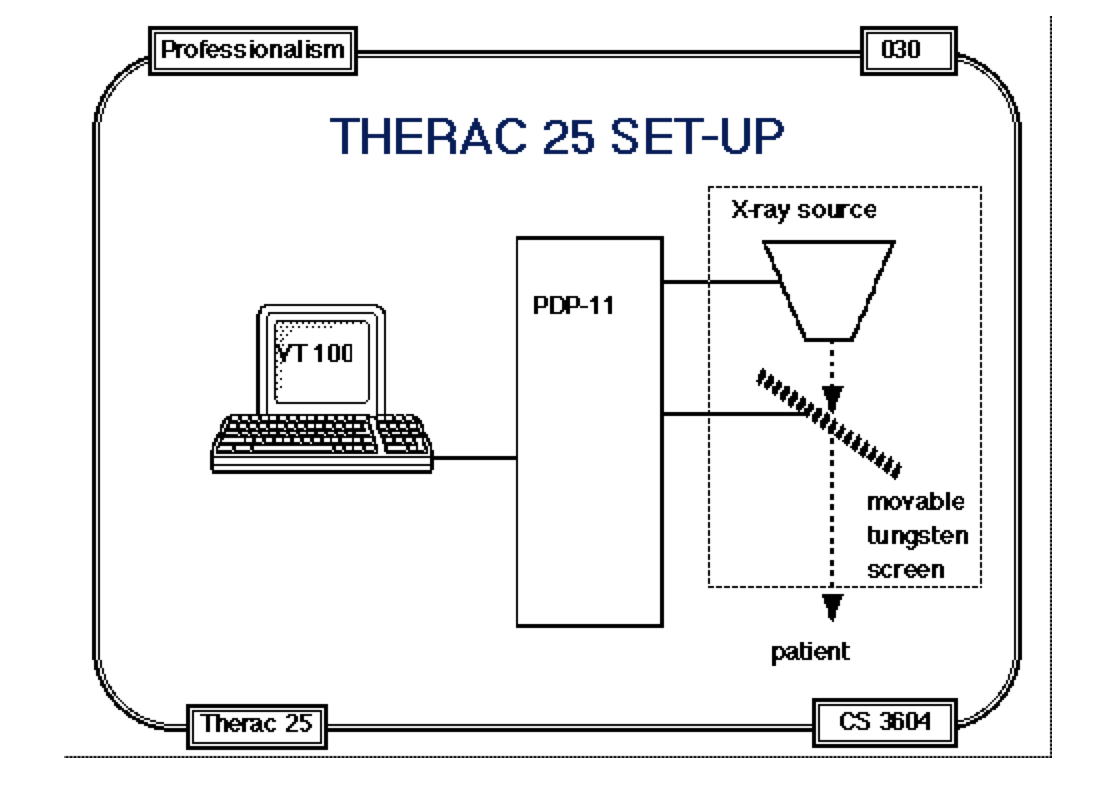

Software kills people.

We are pathetically 40 years behind on the safety/security front.

Why OCaml?

OCaml is:

- mature and evolving

→ code from the 90's + GADTs + subtyping + modules - rich and strict type system

→ express safety/security lightweight theorems - focus on readability

- fast, portable, hackable

- future-friendly: start replacing some modules with Coq-extracted code

Ketrew

“Keep Track of Experimental Workflows”

github.com/hammerlab/ketrew

- EDSL/library to write programs that build workflows/pipelines

- A separate application, The “Engine”, orchestrates those workflows

Features

In the 2.0.0, in opam since last weekend:

- EDSL trying to be usable by OCaml beginners

- Client-server (HTTPS + JSON) (+ “degraded” standalone mode)

- A command-line client

- A WebUI

- LSF, PBS, YARN,

nohup/setsid, or “Python” backends

+ plugin infrastructure to add backends

Tested with big bioinformatics pipelines, but also running backups, building documentation …

Read-only Live Demo:

ketrew-demo.mondet.org:8080/gui?filter=%28all%29&token=demotoken

Defining Workflows

let make_backup ?(gpg=true) ?(dest_base="/tmp/backup") ~host ~dir () = let destination ext = file ~host (sprintf "%s.%s" dest_base ext) in let targz = destination "tar.gz" in let make_targz = target ~done_when:targz#exists "make-tar.gz" ~make:(daemonize ~host Program.( shf "cd %s/../" dir && shf "tar cfz '%s' '%s'" targz#path (Filename.basename dir))) in let md5 = destination "md5" in let md5_targz = target ~done_when:md5#exists "md5-of-targz" ~depends_on:[make_targz] ~make:(daemonize ~host Program.(shf "md5sum '%s' > '%s'" targz#path md5#path)) in let gpg_targets () = let gpg = destination "tar.gz.gpg" in let make_it = target "gpg-of-targz" ~done_when:gpg#exists ~depends_on:[make_targz; md5_targz] ~make:(daemonize ~host Program.( sh ". ~/.backup_passphrase" && shf "gpg -c --passphrase $BACKUP_PASSPHRASE -o '%s' '%s'" gpg#path targz#path)) in let clean_up = target "rm-tar.gz" ~depends_on:[make_it] ~make:(daemonize ~host (Program.shf "rm -f '%s'" targz#path )) in [make_it; clean_up] in let common_ancestor = let depends_on = if gpg then gpg_targets () else [make_targz; md5_targz] in target "make-targz common ancestor" ~depends_on in Ketrew.Client.submit common_ancestor

Tyxml_js, React, And 3.5 MB GIFs

Biokepi

Reusable bioinformatics pipelines using Ketrew.

- re-use intermediate results, make everything restartable

- encode knowledge about insane biology software

- give semantics to parameters, add constraints

- take care of most software installations

- improve performance, “scatter-gather” some computations

Organized in an OCaml library, with modules, a build system, and all

Some Cool Stuff Under The Hood

Dynamic linking + first class modules:

- execution backends as “plugins”

- still can avoid dynlink with some build-magic

Fully jump on the PPX bandwagon:

ppx_deriving_yojsoneverywhere + functors to force versioning- code-coverage analysis →

rleonid/bisect_ppx - even

js_of_ocaml's very fresh PPX syntax

And …

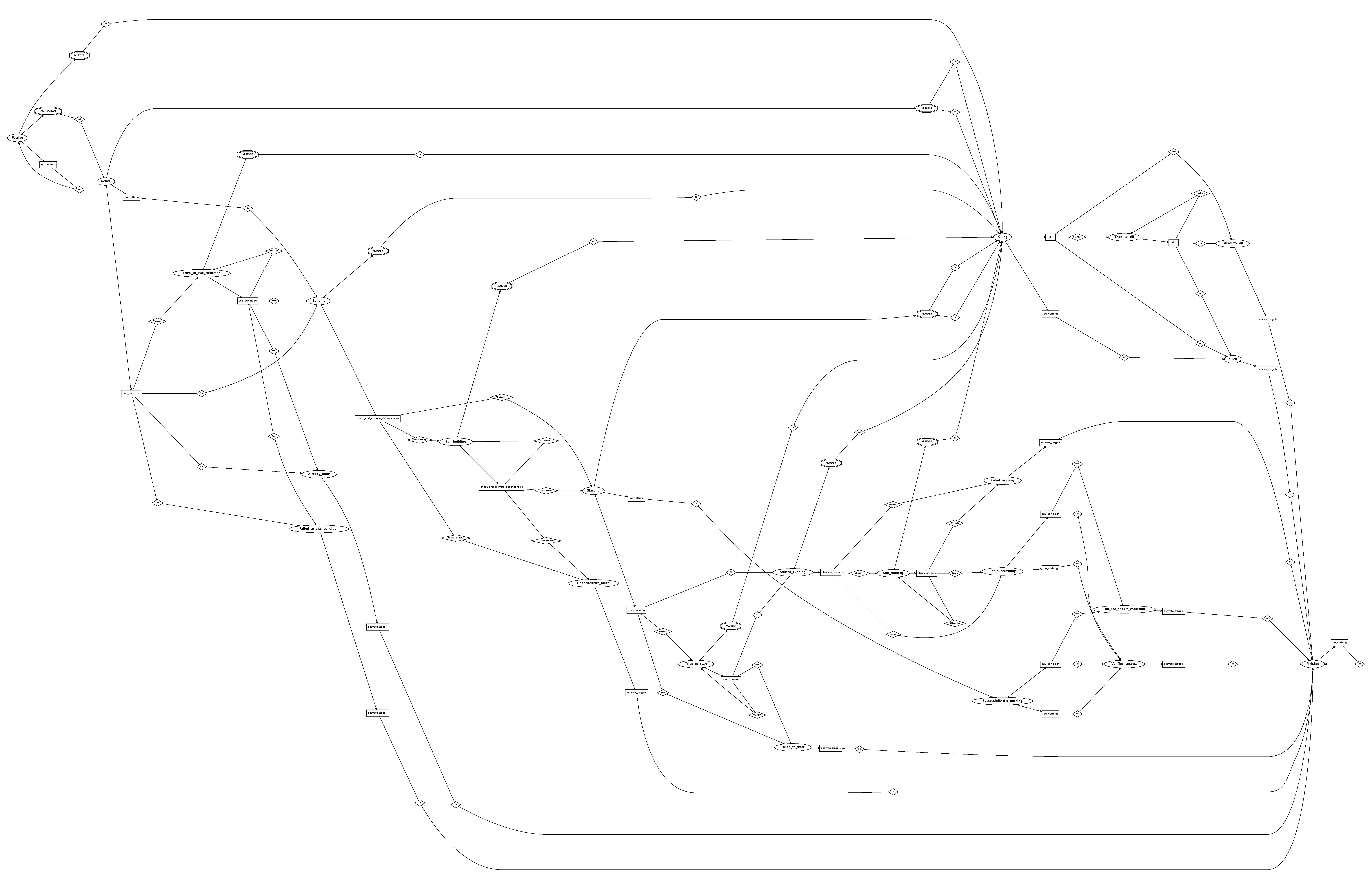

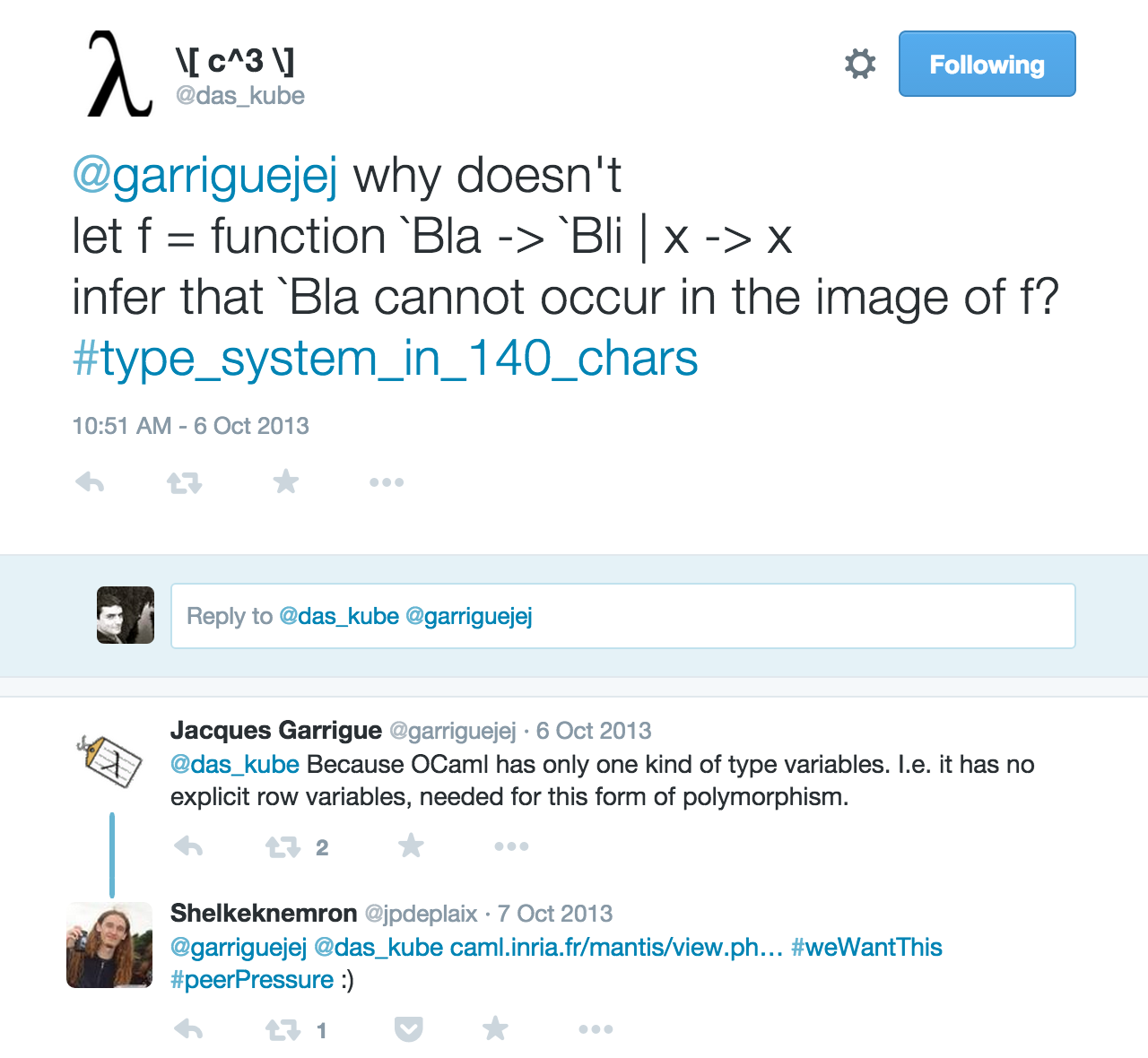

Polymorphic-Variants → Automaton

State machine encoded with sub-typing

Structural Sub-Typing of States

type passive = [ `Passive of log ] type active = [ `Active of (passive history * [ `User | `Dependency of id ])] type evaluating_condition = [ | active | `Tried_to_eval_condition of evaluating_condition history ] type already_done = [ | `Already_done of evaluating_condition history ] ... type killable_state = [ | passive | evaluating_condition | building | starting | running ] type killing = [ | `Killing of killable_state history | `Tried_to_kill of killing history ] ... type t = [ | killing | killed | killable_state | successful_run | finishing_state | finished ]

Structural Sub-Typing of States

⇒ Pure/total/exceptionless

val transition: state -> (do_some_io * (some_io_result -> state))

⇒ There is a test that traverses all the edges and draws a dot-graph

But,

- quite a few “big match-with” functions (for display, counting stuff …)

- bunch of code to simplify and “flatten” the state (

Yojsonstack-overflowing on Chrome)

GADTs, Hammers, and Nails

type _ t = | Fastq_gz: File.t -> fastq_gz t | Fastq: File.t -> fastq t | Bam_sample: string * bam -> bam t | Bam_to_fastq: [ `Single | `Paired ] * bam t -> fastq_sample t | Paired_end_sample: string * fastq t * fastq t -> fastq_sample t | Single_end_sample: string * fastq t -> fastq_sample t | Gunzip_concat: fastq_gz t list -> fastq t | Concat_text: fastq t list -> fastq t | Star: fastq_sample t -> bam t | Hisat: fastq_sample t -> bam t | Bwa: bwa_params * fastq_sample t -> bam t | Bwa_mem: bwa_params * fastq_sample t -> bam t | Gatk_indel_realigner: bam t -> bam t | Picard_mark_duplicates: Picard.Mark_duplicates_settings.t * bam t -> bam t | Gatk_bqsr: bam t -> bam t | Bam_pair: bam t * bam t -> bam_pair t | Somatic_variant_caller: Somatic_variant_caller.t * bam_pair t -> vcf t | Germline_variant_caller: Germline_variant_caller.t * bam t -> vcf t

Typed bioinformatics pipelines!

Compiling Pipelines

let pipeline_example ~normal_fastqs ~tumor_fastqs ~dataset = let open Biokepi_pipeline.Construct in let normal = input_fastq ~dataset normal_fastqs in let tumor = input_fastq ~dataset tumor_fastqs in let bam_pair ?gap_open_penalty ?gap_extension_penalty () = let normal = bwa ?gap_open_penalty ?gap_extension_penalty normal |> gatk_indel_realigner in let tumor = bwa ?gap_open_penalty ?gap_extension_penalty tumor |> gatk_indel_realigner in pair ~normal ~tumor in let bam_pairs = [ bam_pair (); bam_pair ~gap_open_penalty:10 ~gap_extension_penalty:7 (); ] in let vcfs = List.concat_map bam_pairs ~f:(fun bam_pair -> [ mutect bam_pair; somaticsniper bam_pair; somaticsniper ~prior_probability:0.001 ~theta:0.95 bam_pair; varscan bam_pair;]) in vcfs

Compiled to more than 3000 Ketrew targets, JSON-pipeline registration in a DB, …

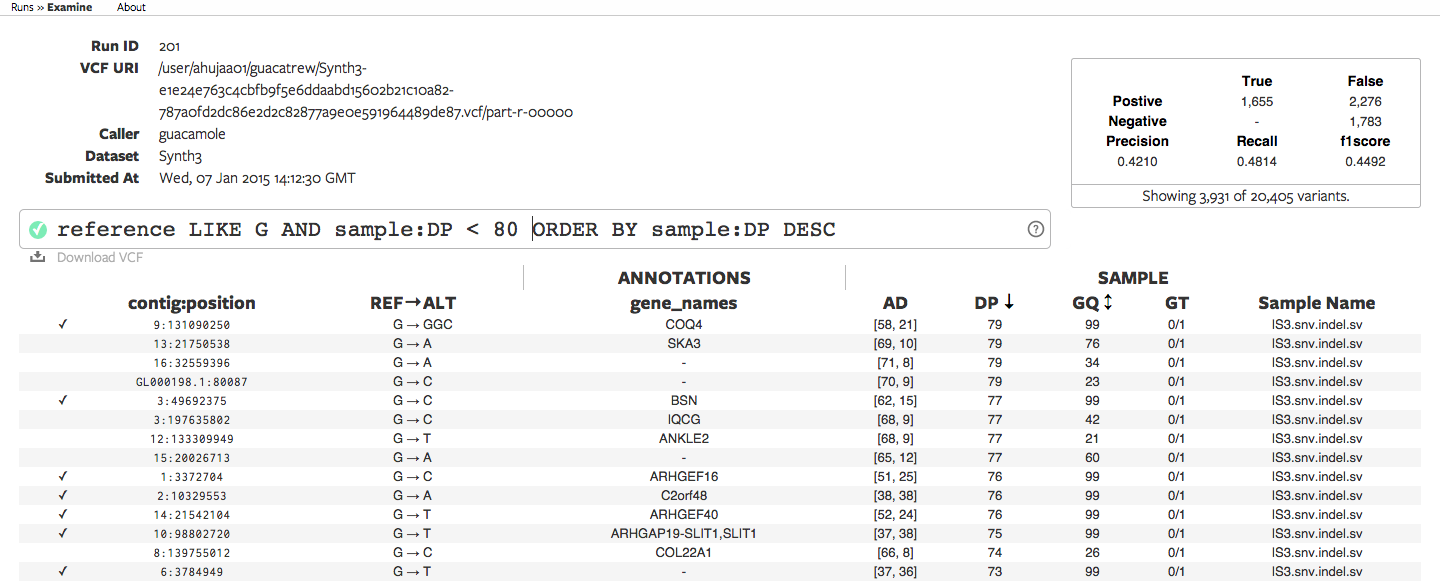

Running Pipelnies

Runs for days, then posts to

hammerlab/cycledash

Error Monad With Polymorphic Variants

utop> IO.read_file;; - : string -> (string, [> `IO of [> `Read_file_exn of string * exn ] ]) Deferred_result.t utop> IO.write_file;; - : string -> content:string -> (unit, [> `IO of [> `Write_file_exn of string * exn ] ]) Deferred_result.t utop> System.with_timeout;; - : float -> f:(unit -> ('a, [> `System of [> `With_timeout of float ] * [> `Exn of exn ] | `Timeout of float ] as 'error) Deferred_result.t) -> ('a, 'error) Deferred_result.t

Polymorphic Variants Are Awesome

utop> let dumb_copy_with_timeout ~seconds ~src ~dest = System.with_timeout seconds ~f:(fun () -> IO.read_file src >>= fun content -> IO.write_file dest ~content );; val dumb_copy_with_timeout : seconds:float -> src:string -> dest:string -> (unit, [> `IO of [> `Read_file_exn of string * exn | `Write_file_exn of string * exn ] | `System of [> `With_timeout of float ] * [> `Exn of exn ] | `Timeout of float ]) Deferred_result.t

Kinda looks like algebraic effects …

Add Constraint

→ Make sure all “systems” and “network” are handled errors within the state machine.

module Run_automaton : sig val step : t -> (bool, [> `Database of Trakeva.Error.t | `Database_unavailable of string | `Missing_data of Ketrew_pure.Target.id | `Target of [> `Deserilization of string ] ]) Deferred_result.t

Feature Request

Now more fashionable with algebraic effects?

The End

Thanks! Questions?

- Happy to hear about Ketrew being useful in any other setting!

- Contribute/complain: https://github.com/hammerlab/ketrew/issues

Some OCaml Lessons Learned

Pain:

- Oasis, OCamlbuild

- Camlp4

- Huge Jane-Street-style libraries

Pleasure:

- Pure OCaml, Functors, GADTs

- Ocsigen (minimizing the Camlp4 part)

- Lwt with Error Monad based on Polymorphic Variants

- Bünzli-style small libraries

- Code Generators (ATDgen)

- Opam

More Tyxml_js + React

Why Not F# / Scala

- Big runtime

- Type-systems are huge untractable messes (esp. Scala)

- but most importantly:

nullpointers

Why Not Haskell

- lazy by default → difficult to reason about performance/concurrency

- purity in the Haskell “weak” sense is mostly useless

- looping forver, eating all the memory are much more annoying “side-effects” than writing to a log file or getting the time-of-day

- putting ⊥ everywhere makes reasoning about pure code actually more difficult

- type-classes in practice:

used 95% of the time to make the code as unreadable as possible

(see the Lens library, it looks uglier than perl) - more unreadability: need to know every possible GHC extension

(there is even dynamic typing in there!?) - indentation-based grammar makes code hard to read (blocs > 7 lines)

- no polymorphic variants, labeled/optional arguments, functors …

how do people handle large code-bases in the long run?

Want More GATK?

It uploads reports to AWS without your consent.

-et,--phone_home Run reporting mode (NO_ET|AWS| STDOUT)

-K,--gatk_key GATK key file required to run with -et NO_ET Thanks

Done with

github.com/smondet/makmash

which is a bunch of hacks on top of

generating a

github.com/hakimel/reveal.js

presentation.

Meta

- Compiled on

Fri, 04 Sep 2015 11:07:08 -0700 - Git:

09de920-dirty